The problem with “traditional” release management

I say “traditional” release management for lack of a better term. What I mean by that is having separate phases (and teams) for development, system test, user acceptance test (UAT) and finally production. The problem is that it simply takes too long from the moment a developer commits code until that code is eventually deployed to production. In my experience, this is mostly due to delays in the hand-offs from one phase to another. And as we all know thanks to Lean Thinking, this is waste.

Why you (as a developer) should care

The deployments to these different environments are usually stressful, because they carry some risk of taking down the system you are deploying to. And the closer you get to production, the higher the penalty for doing so becomes. Because of this risk, deployments usually take place when potential problems would have the least impact on the users of these environments, which generally means outside business hours. And last but not least, deployments tend to be boring and tedious tasks. Or did you ever get excited about doing a release? Yeah, that’s what I thought.

Why you (as a manager) should care

Of course, you also care about the factors mentioned above, because you want your developers to be as happy as possible, right? But furthermore, you especially dislike the risks associated with deployments, because you have to factor them into all sorts of calculations. Additionally, all these delays between phases mean that your time to market for new features and bug fixes is much longer than it needs to be, which means you are losing money. Constantly.

The obvious solution

It’s easy, right? The answer is continuous deployment. You fully automate your testing and deployment and you deploy straight to production after every commit. Sounds great. The problem is: most organisations are not ready for full test automation or are just not willing to give up manual testing. This may be for security or legal reasons, potential loss of revenue and/or reputation, or something else. Bottom line is: pure continuous deployment into production without any manual testing is not widely accepted. Period. I am not saying this goal is not worth aiming for. Trust me, I am all for it. I just don’t see it happening in the near future in most companies. In my opinion, the only way to practice continuous deployment into production is by having sophisticated real-time alerting combined with a system with built-in resilience. This would allow you to automatically detect and back out a failed deployment without an interruption of the system as a whole. Have a read of this blog post about how the guys at kaChing managed to achieve this.
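To make the "detect and back out" idea a bit more concrete, here is a minimal sketch in Python. Everything in it is an assumption for illustration: `deploy`, `rollback` and `is_healthy` are hypothetical callables standing in for whatever your deployment scripts and alerting system provide, and it is not a description of how kaChing does it.

```python
import time
from typing import Callable


def deploy_with_backout(
    deploy: Callable[[str], None],      # hypothetical: pushes a version live
    rollback: Callable[[str], None],    # hypothetical: restores a version
    is_healthy: Callable[[], bool],     # hypothetical: asks the alerting system
    new_version: str,
    previous_version: str,
    checks: int = 5,
    interval_s: float = 1.0,
    sleep: Callable[[float], None] = time.sleep,
) -> bool:
    """Deploy new_version, watch it for a while, back out on the first alert.

    Returns True if the new version survived all health checks,
    False if it was rolled back to previous_version.
    """
    deploy(new_version)
    for _ in range(checks):
        if not is_healthy():
            # An alert fired: restore the last known good version.
            rollback(previous_version)
            return False
        sleep(interval_s)
    return True
```

The sketch only covers the decision logic. Keeping the system as a whole available while a bad version is being backed out is the job of the built-in resilience mentioned above, for example by only routing part of the traffic to the new version at first.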

A step in the right direction

Don’t worry, not all is lost. Even if continuous deployment is not achievable for you right now, that doesn’t mean you can’t improve your situation at all. You can take the first steps towards continuous deployment by decreasing the batch size of deployments and automating the deployment and hand-offs between the environments.

- Decreasing the batch size should be easily achieved by just allowing automatic deployments into your first test environment, but of course only after a successful build that runs all your automated tests.

- Automating the deployment may require some tailored scripts to suit your system. However, if you are using Hudson and your deployment artifact is a WAR or EAR file, you can use the deploy plugin, which is based on Cargo.

- Automating the hand-offs could be achieved by using a central release management dashboard that is used by developers, testers and managers.

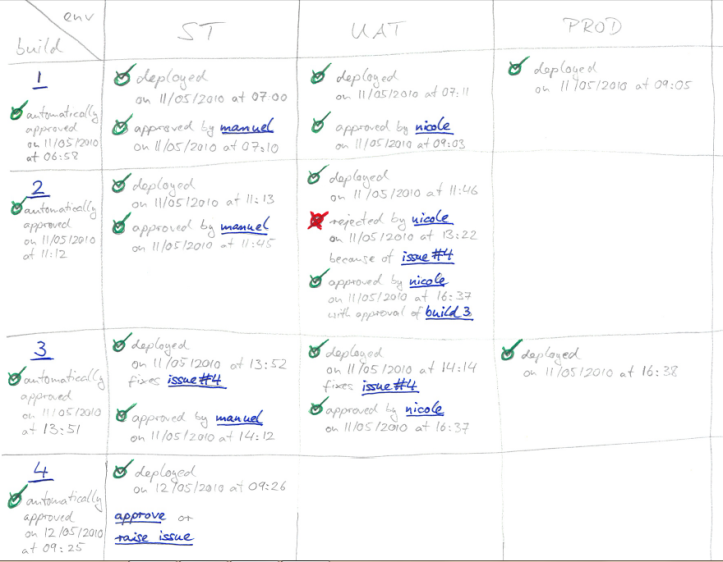

So far I haven’t found any Hudson plugin that would provide this. What I have in mind is something like the following:

- Deployments can be configured to happen automatically or to require manual approval.

- When a deployment to an environment finishes, a configured set of people gets notified.

- The different environments where the application is running can be accessed directly from the dashboard.

- When a build is approved and all previous builds have been approved as well (there might be dependencies), it is deployed to the next environment.

- When rejecting a build:

  - A tester raises one or more issues for the build. The issue tracking system is tightly integrated into the dashboard and can be reached via a link, which pre-fills relevant fields about the current build and project. This automatically rejects the build.

  - The developer who committed the change that triggered the rejected build gets notified.

  - The developer fixes the issue (after creating a failing test to expose it, of course).

  - The developer commits the fix with a reference to the issue in the commit comment. This triggers a new automatic build, plus an automatic update of the issue and a notification to the tester who raised it (like the Trac SVN Policies Plugin).

  - When the tester approves the new build, the old build gets automatically approved as well, if this was the last outstanding issue for the old build.
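The approval and rejection rules above can be sketched as a small Python model. This is only an illustration of the rules as I described them, not an existing Hudson plugin or API; the names `ApprovalGate`, `Build`, `register`, `raise_issue` and `approve` are all made up for this sketch, and the `deploy` callable stands in for whatever actually performs the deployment.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Callable, Iterable


class Status(Enum):
    PENDING = "pending"
    APPROVED = "approved"
    REJECTED = "rejected"


@dataclass
class Build:
    number: int
    fixes: frozenset[str] = frozenset()  # issue ids named in the commit comment
    status: Status = Status.PENDING
    open_issues: set[str] = field(default_factory=set)


class ApprovalGate:
    """Hypothetical gate between one environment and the next one."""

    def __init__(self, next_env: str, deploy: Callable[[int, str], None]):
        self.next_env = next_env
        self.deploy = deploy
        self.builds: dict[int, Build] = {}

    def register(self, number: int, fixes: Iterable[str] = ()) -> None:
        self.builds[number] = Build(number, frozenset(fixes))

    def raise_issue(self, number: int, issue_id: str) -> None:
        # Raising an issue automatically rejects the build.
        build = self.builds[number]
        build.open_issues.add(issue_id)
        build.status = Status.REJECTED

    def approve(self, number: int) -> None:
        build = self.builds[number]
        if build.open_issues:
            raise ValueError(f"build {number} still has open issues")
        build.status = Status.APPROVED
        # Approving a fix closes the issues it references on older builds;
        # an older build with no issues left is approved automatically.
        for older in self.builds.values():
            if older.number < number and older.open_issues & build.fixes:
                older.open_issues -= build.fixes
                if not older.open_issues:
                    older.status = Status.APPROVED
        # Deploy only if every earlier build has been approved as well.
        earlier = (b for b in self.builds.values() if b.number < number)
        if all(b.status is Status.APPROVED for b in earlier):
            self.deploy(number, self.next_env)
```

One simplification to be aware of: a build that is approved while an earlier build is still pending is not deployed retroactively once that earlier build gets approved. Only the build being approved at that moment is considered for deployment.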

In my mind it looks somewhat like the image above.

In order to do pure continuous deployment you could then configure the dashboard with only one environment (=production) with automatic deployment. You can, however, choose to set up as many intermediate environments as you like and also require manual approval of deployments to any of them.
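As a rough illustration of what such a configuration could look like, here is a small Python sketch. The `Environment` type, the environment names and the `manual_gates` helper are hypothetical and only meant to show the two extremes.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Environment:
    name: str
    auto_deploy: bool  # False means a manual approval is required first


def manual_gates(pipeline: list[Environment]) -> list[str]:
    """Return the environments that wait for a manual approval."""
    return [env.name for env in pipeline if not env.auto_deploy]


# Pure continuous deployment: one environment, deployed to automatically.
CONTINUOUS = [Environment("production", auto_deploy=True)]

# A staged setup: automatic into the first test environment,
# manual approval for everything after it.
STAGED = [
    Environment("system-test", auto_deploy=True),
    Environment("uat", auto_deploy=False),
    Environment("production", auto_deploy=False),
]
```

Moving from the staged setup towards continuous deployment then becomes a matter of flipping `auto_deploy` for one environment at a time, or removing an intermediate environment entirely.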

Does anyone know if a Hudson plugin with this functionality exists or is in the making?

With this in place, I believe deployments can become non-events, in the sense of being effortless and stress-free. This does not mean you should stop celebrating your releases, though.

Hi Manuel,

this demand for a software life-cycle process sounds familiar to me. It is a common wish among customers.

In a SOA landscape this topic is known as ‘Governance’. Life-cycle management is part of it. But it’s not only good for SOA. If your software landscape consists of many artifacts, you need a solution to ‘govern’ it.

Governance tools allow you to design a life-cycle process for every artifact. For every process step you can add design-time policies. These can be approval policies, as you mentioned, or notification jobs.

From my experience that is still a very theoretical topic. Although there are software solutions for this, I have never seen any in production. And unfortunately I don’t know an open source solution for it.

In the near future I have the challenge of building such a life-cycle management process for a customer. Maybe I can contribute some hands-on experience then.

Hello,

I’m currently using Gerrit (http://gerrit.googlecode.com/) and Hudson for something like this. The current workflow looks roughly like this:

1. Change is pushed into Gerrit

2a. Hudson notices the change and runs a build, reporting the results to Gerrit (http://blog.hudson-ci.org/content/pre-tested-commits-git).

2b. The change is manually reviewed and tested. Reviewers/testers can pull the change from Gerrit for testing.

3. If the change is good, i.e. the Hudson job succeeds and the reviewers give the green light, the change is submitted to Gerrit’s main repository.

4. Hudson notices a new change in Gerrit’s main repository and deploys a new version to the beta server.

I tried to explain this with a flow chart in a short blog post: http://softwarefromnorth.blogspot.com/2010/04/almost-continuous-deployment-with.html

I’m using a beta server because our customers didn’t want to have this on the live server. The beta and live servers are swapped biweekly, though, meaning that the beta server becomes the live one and the former live server is cleaned.

Hi!

Interesting idea, but how do you automate user acceptance? How do you automate security requirements? How do you automate interfaces between companies? How do you automate updated user documentation? Or production documentation? How do you guarantee that release units spanning multiple applications / dev groups with different policies work together well? What about the requirement that no developer ever touches the live systems? If deployment is automated, isn’t that equivalent to direct access? Who tests the tests? And how?

I don’t mind having a simple & lean release process, but aiming at a fully automated process where a developer commit updates the production environment after X automated tests is not a quality target.

Thank you, Marc, for your interesting pointers. I would like to say a few words about each of them.

I would like to make it clear once more: When talking about automated tests I am not just talking about unit tests, but also about functional tests that test your deployed and running application. Furthermore, doing continuous deployment requires a high level of discipline from a development team that knows what they are doing.
