I think distributed CI is the logical next step in the evolution of Continuous Integration: it removes the manual step of running a private build on the developer machine before committing to the master repository. Here is how I imagine this might work.

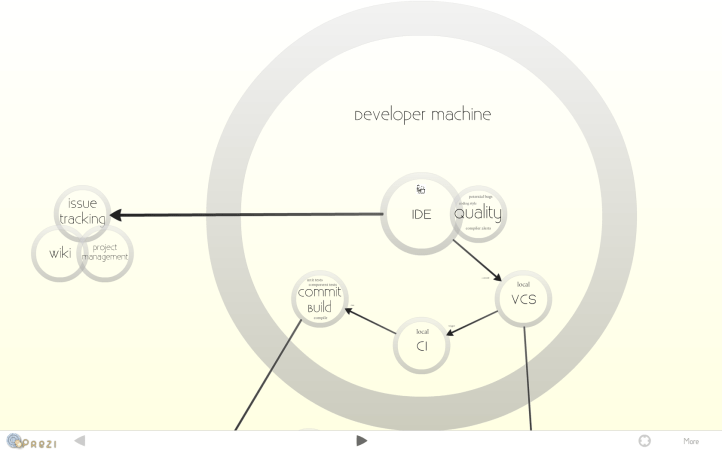

- Managers, developers and customers (or all stakeholders, if you will) collaborate via a wiki and an issue tracking tool. These two components tend to be heavily intertwined and are often realized within a single tool. Project management happens here as well.

- Developers can access the tasks in the issue tracker from within their development environment (IDE).

- While programming, quality assurance plugins inside the IDE point out potential bugs or design flaws to developers, using the same metrics that the quality analysis on the central CI server measures.

- Whenever the system is in a consistent state, developers commit their changes to their local clone of the Version Control System (VCS) repository. This should happen frequently: several times a day. If you don’t commit frequently, you are not doing continuous integration. (Side note: the VCS should contain everything that is needed to produce the system from scratch, including database scripts and operating system setup (aka infrastructure as code). Everything.)

- The local Continuous Integration (CI) tool notices the changes in the VCS repository, gets the latest changes from the master repository and kicks off a build of the changed code module. The build also runs a suite of unit tests to make sure the changes haven’t broken any existing code.

- After successfully building (and testing) the code, the build tool stores the created artefacts in a repository manager. Note: I believe build artefacts don’t belong in your VCS, for several reasons. You shouldn’t need to store them there, because you should be able to recreate them from any version in your VCS. There are also reasons why doing so might be a bad idea. Most importantly, it makes traceability harder: for each artefact I want to know which version of my source was used to build it, and if build artefacts are stored in the VCS and therefore create new versions themselves, that link becomes harder to follow. Also, the snapshot versions produced many times a day, some of which might even break the build, are not worth the disk space they occupy.

- Once the local commit build has run successfully and the snapshot artefacts are stored in the repository manager, the local CI tool pushes the changes to the master repository.
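
The local commit build described above boils down to a gate: pull, build and test, publish, and only then push. The following is a minimal sketch of that ordering, not the behaviour of any particular CI product. The class `LocalCommitBuild` and its four step callables (`pull_master`, `build_and_test`, `publish_snapshot`, `push_to_master`) are hypothetical; in practice you would wire them to your own VCS, build and repository manager commands.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class LocalCommitBuild:
    """Hypothetical local CI gate: changes reach master only after a green build."""

    pull_master: Callable[[], None]        # merge the latest changes from the master repository
    build_and_test: Callable[[], bool]     # build the changed module and run its unit tests
    publish_snapshot: Callable[[], None]   # store snapshot artefacts in the repository manager
    push_to_master: Callable[[], None]     # push the local commits to the master repository
    log: List[str] = field(default_factory=list)

    def on_local_commit(self) -> bool:
        """Run the gate for a new local commit; return True if the changes were pushed."""
        # Build against the merged state, not just the developer's own changes.
        self.pull_master()
        self.log.append("pulled master")
        if not self.build_and_test():
            # A red build stops here: nothing is published and nothing is pushed.
            self.log.append("build failed; push blocked")
            return False
        self.log.append("build green")
        self.publish_snapshot()
        self.log.append("snapshot published")
        self.push_to_master()
        self.log.append("pushed to master")
        return True
```

Pulling before building means the local build verifies the same merged state that the central CI server will see after the push.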
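
The traceability argument above (for each artefact I want to know which source version built it) can be illustrated with a tiny in-memory stand-in for a repository manager. This is a sketch under assumptions: `ArtefactStore` and `ArtefactRecord` are hypothetical names, not the API of Nexus or any other product. It records the VCS revision and a SHA-256 checksum next to Maven-style coordinates, lets `-SNAPSHOT` versions be overwritten, and refuses to overwrite release versions, which mirrors a common repository manager convention.

```python
import hashlib
from dataclasses import dataclass
from typing import Dict, Tuple

Coordinates = Tuple[str, str, str]  # (groupId, artifactId, version), Maven-style


@dataclass(frozen=True)
class ArtefactRecord:
    coordinates: Coordinates
    source_revision: str  # VCS commit the artefact was built from
    sha256: str           # checksum of the artefact bytes


class ArtefactStore:
    """Hypothetical in-memory stand-in for a repository manager."""

    def __init__(self) -> None:
        self._records: Dict[Coordinates, ArtefactRecord] = {}
        self._blobs: Dict[Coordinates, bytes] = {}

    def publish(self, group: str, artifact: str, version: str,
                payload: bytes, source_revision: str) -> ArtefactRecord:
        coords = (group, artifact, version)
        is_snapshot = version.endswith("-SNAPSHOT")
        if coords in self._records and not is_snapshot:
            # Release versions are treated as immutable once published.
            raise ValueError(f"release {coords} already published")
        record = ArtefactRecord(coords, source_revision, hashlib.sha256(payload).hexdigest())
        self._records[coords] = record
        self._blobs[coords] = payload
        return record

    def source_of(self, group: str, artifact: str, version: str) -> str:
        """Answer the traceability question: which commit produced this artefact?"""
        return self._records[(group, artifact, version)].source_revision
```

Because the artefacts live outside the VCS, publishing them never creates new source revisions, so the revision stored with each record stays an unambiguous pointer back to the code that produced it.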

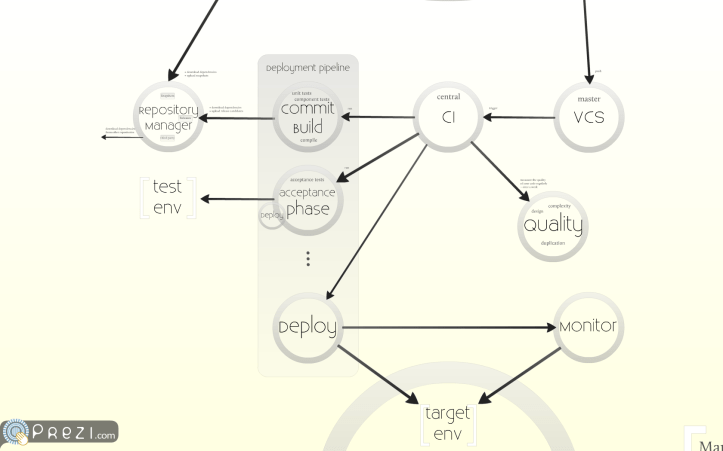

- As on the developer machine, the central CI tool picks up the changes and runs a commit build. The produced artefact becomes a release candidate with a new version number and is stored in the repository manager, where later phases can retrieve it. (The maven-release-plugin is a great tool to automate this.)

- The produced artefacts are then deployed automatically: the deployment tool fetches them from the repository manager and deploys them to a test environment.

- There, the CI tool runs automated acceptance tests. If these succeed, the tested artefacts are promoted to the last phase of your deployment pipeline: deployment to production. It is important to note that only the commit build phase produces new artefacts; all following phases reuse them. An example of how to configure Maven not to rebuild the artefacts in later phases can be found here. Your own deployment pipeline may include more phases than just these two, for instance a manual test phase or a performance test phase. These can usually run in parallel and therefore require separate environments (hint: virtual machines are of great help here, but that’s a topic for another post).

- Deployment to production happens either automatically, if you are practicing continuous deployment (good on you), or manually from your CI server. Even when done manually, it should only require the click of a button and should only be possible for artefacts that have successfully completed the previous phases of your deployment pipeline.

- Periodically, the central CI tool runs a code quality analysis tool that measures code quality through code coverage and other metrics. Developers can access the violations it discovers and fix them directly within the IDE.

- The IDE, the VCS, the CI tool and the code quality analysis tool all feed information back to the issue tracking tool to provide a complete picture of the status of each tracked task.

- Automated monitoring keeps an eye on the system while it runs in the target environments. It can also be used during automated deployments to decide whether a deployment succeeded or needs to be rolled back. There is broad agreement that the monitoring should check business metrics rather than technical aspects.
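
For the release candidate numbering in the central commit build, the maven-release-plugin by default turns a `-SNAPSHOT` version into a release version and suggests the next development version. The sketch below reproduces that default for simple dotted numeric versions only; the function name `release_versions` is hypothetical, and qualifiers such as `-beta` or `-RC1` are deliberately rejected rather than guessed at.

```python
import re
from typing import Tuple

SNAPSHOT_SUFFIX = "-SNAPSHOT"


def release_versions(current: str) -> Tuple[str, str]:
    """Return (release_version, next_development_version) for a snapshot version.

    Drops -SNAPSHOT for the release, then increments the last numeric
    component for the next development cycle, e.g. 1.2.3-SNAPSHOT gives
    1.2.3 and 1.2.4-SNAPSHOT.
    """
    if not current.endswith(SNAPSHOT_SUFFIX):
        raise ValueError(f"not a snapshot version: {current}")
    release = current[: -len(SNAPSHOT_SUFFIX)]
    # Only plain dotted numbers are handled in this sketch.
    if not re.fullmatch(r"\d+(\.\d+)*", release):
        raise ValueError(f"unsupported version format: {release}")
    parts = release.split(".")
    parts[-1] = str(int(parts[-1]) + 1)
    return release, ".".join(parts) + SNAPSHOT_SUFFIX
```

For example, `release_versions("2.9-SNAPSHOT")` yields `("2.9", "2.10-SNAPSHOT")`: components are compared as integers, not as strings.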
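
The "build once, promote everywhere" rule from the pipeline steps above (only the commit build creates artefacts, and production only accepts artefacts that passed every earlier phase) can be expressed as a small state check. This is a minimal sketch with hypothetical names (`Pipeline`, stage names such as `"acceptance"`); real CI servers model promotion in their own ways.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Set


@dataclass
class Pipeline:
    """Hypothetical build-once pipeline: only the first stage creates artefacts."""

    stages: List[str]  # ordered, e.g. ["commit", "acceptance", "production"]
    passed: Dict[str, Set[str]] = field(default_factory=dict)  # artefact id -> stages passed

    def register_build(self, artefact_id: str) -> None:
        # The commit build is the only place where a new artefact comes into existence.
        self.passed[artefact_id] = {self.stages[0]}

    def can_enter(self, artefact_id: str, stage: str) -> bool:
        if artefact_id not in self.passed:
            return False  # never produced by the commit build: reject instead of rebuilding
        index = self.stages.index(stage)
        return all(s in self.passed[artefact_id] for s in self.stages[:index])

    def record_pass(self, artefact_id: str, stage: str) -> None:
        if not self.can_enter(artefact_id, stage):
            raise PermissionError(f"{artefact_id} has not passed the stages before {stage}")
        self.passed[artefact_id].add(stage)
```

The stages are a linear list here for brevity. Phases that run in parallel, such as manual and performance testing, would need a set of prerequisite stages per stage instead.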
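
Using monitoring to decide whether a deployment should be rolled back can be as simple as comparing a business metric before and after the deployment. The sketch below assumes a hypothetical metric such as orders per minute and a hypothetical tolerance `min_ratio`; both are illustrative choices, not values from any particular monitoring tool.

```python
from statistics import mean
from typing import Sequence


def should_roll_back(baseline: Sequence[float],
                     after_deploy: Sequence[float],
                     min_ratio: float = 0.8) -> bool:
    """Decide whether to roll back a deployment based on a business metric.

    baseline: samples of the metric (e.g. orders per minute) before deploying.
    after_deploy: samples of the same metric after deploying.
    min_ratio: hypothetical tolerance; roll back if the post-deployment average
    falls below this fraction of the baseline average.
    """
    if not baseline or not after_deploy:
        raise ValueError("need samples from before and after the deployment")
    base = mean(baseline)
    if base == 0:
        return False  # no baseline activity, so there is nothing to compare against
    return mean(after_deploy) < min_ratio * base
```

Checking a business metric rather than, say, CPU load catches deployments that run fine technically but quietly break a user-facing flow.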

You can find the entire diagram in animated form here.

Here are my favorite tools for the above process:

- Wiki: Confluence

- Issue Tracking and Project Management: Jira

- IDE: Eclipse (with Mylyn for Jira issue/task integration and Sonar IDE to see all the technical debt right in your IDE where you need it the most)

- VCS: Git/Mercurial (I am undecided on this one)

- Build: Maven

- CI: Hudson (soon to be Jenkins)

- Repository Manager: Nexus

- Quality Analysis: Sonar

- Deployment: Cargo (for deploying to an application server) + Vagrant/Puppet (for creating, provisioning and managing environments)

- Monitoring: Splunk

If you haven’t seen it yet, you might find the Google Tech Talk on their distributed CI interesting: http://www.youtube.com/watch?v=b52aXZ2yi08
